Assessment in Secondary Education, is it formative and shared? Exploring perceptions of professionals and future professionals in Education

¿Es formativa la evaluación en Educación Secundaria? Explorando percepciones de profesionales y futuros profesionales de Educación

Sonia Asún Dieste, Mª Rosario Romero-Martín, Esther Cascarosa Salillas, Isabel Iranzo Navarro


Cultura, Ciencia y Deporte, vol. 18, no. 55, 2023

Universidad Católica San Antonio de Murcia

Received: 24 July 2022

Accepted: 13 January 2023

Abstract: The aim of this research was to explore the perceptions of education professionals and future professionals on the existence of formative assessment in secondary education. A qualitative and quantitative convergent parallel mixed method study was designed. The participants were 8 Secondary Education teachers who collaborate as tutors in the Teaching Master’s Degree at a Spanish public university and who took part in a focus group, and 60 pre-service students who were completing a practicum in schools for this same Master’s Degree and who answered a questionnaire. The results showed a great diversity in perceptions of the implementation of formative assessment. However, summative assessment, together with performance tests and examinations, seemed to be perceived as predominant in the high schools studied. In conclusion, these means of assessment remain consolidated by traditionalist conceptions, held by teachers as well as by students and families, that limit the progress of formative assessment in schools, while innovative and engaged teachers try to foster its implementation.

Keywords: formative assessment, assessment methods, secondary education, initial teacher training, higher education.

Resumen: El objetivo de esta investigación fue explorar las percepciones de profesionales y futuros profesionales de la Educación sobre la existencia de la evaluación formativa en Educación Secundaria (ES). Se diseñó un método de investigación mixto paralelo convergente, cualitativo y cuantitativo. Participaron 8 profesores de Educación Secundaria que colaboran como tutores en el Master de Profesorado de una universidad pública española en un grupo de discusión y 60 estudiantes en prácticas en centros educativos de ese mismo Master que respondieron a un cuestionario. Los resultados evidenciaron gran disparidad en las percepciones sobre la implementación de evaluación formativa si bien el uso de la evaluación sumativa pareció percibirse como mayoritaria en los centros de ES estudiados, así como los exámenes y pruebas de ejecución. Se concluye que estos medios de evaluación se mantienen consolidados por concepciones tradicionalistas, tanto del profesorado como de estudiantes y familias, que limitan el desarrollo de la evaluación formativa en los centros educativos, mientras los docentes innovadores, implicados y entusiastas, intentan hacerla emerger.

Palabras clave: evaluación formativa, métodos de evaluación, enseñanza secundaria, formación del profesorado, educación superior.

Introduction

At the end of the last century, the intense social, economic and cultural transformations that occurred motivated international organisations (OECD, Council of Europe, UNESCO) to define a common framework of aptitudes that should be acquired by citizens of a knowledge society, to allow individuals to develop in a changing world.

The OECD project Definition and Selection of Competencies (DeSeCo) was generated within this framework (1999). Its aim was to establish a compendium of key competencies which every individual should acquire and which should go beyond the particularities of each specific culture. The European Union in its programme Lifelong education and learning adopted a framework of eight competencies in educational subjects, and recommended that member states incorporate them into their educational programmes (European Parliament, 2006). In Spain the Organic Law on Education (LOE) from 2006 introduced the concept of basic competencies as an essential curricular element in non-university education.

The incorporation of competencies as a curricular element meant that since then there has been a progressive adaptation of the traditional elements of teaching programmes. For Toribio Briñas (2010) this adaptation should involve: (1) reformulating objectives in terms of capacities in a more operative manner; (2) defining multifunctional, transferable and dynamic contents, as the learning acquired should transcend concrete situations; all in a context of transversality and integration of knowledge; (3) designing learning activities within a wider context, that is, not limited solely to the classroom; (4) in methodology and organisation, creating spaces for cooperation and integration among groups from different levels; as well as facilitating the development of personalised learning itineraries according to the students’ needs; and (5) in assessment, breaking down its elements and defining clear assessment indicators. The “assessment procedures should be adequate for the curricular model we are referring to, thus leaving aside the almost hegemonic model of exams” (p.43). Moreover, for the mentioned author the assessment criteria should refer to the key competencies, from which the assessable learning standards will be defined for the end of each cycle (LOMCE, 2013), even though we have to remark that in the new educational law, LOMLOE (2020), these standards have been given the nature of guidelines.

Competencies are the predominant feature of the current educational system in secondary education (SE). No quality programme can organise its elements in a disconnected fashion; it should instead structure them as an organised system with clear conceptual coherence, taking into account Biggs’s constructive alignment (2005). According to this model, programming for competencies is not coherent with traditional assessments based on exams (Toribio Briñas, 2010), as in these the student is solely an object of assessment. However, traditional assessment seems to predominate according to the interpretation of the students themselves, who identify all assessment with a mere written test (Hernández Abenza, 2010).

In contrast, the primordial characteristic of an assessment which is aligned with a competency-based model is its formative character. The aim in this model is “to improve the teaching-learning processes that take place in the classroom” (López Pastor, 2011, p. 35), so that the student perceives that the assessment serves to regulate his or her learning process. This type of assessment overcomes the limitations of the traditional models characterised by implementing assessment instruments that are inadequate to articulate the complex task of assessing the acquisition of competencies by the students and help them in their learning.

The change from traditional to formative assessment in SE is inescapable. Formative assessment prevails as one of the currents which refutes the ideas of traditionalist assessment. It articulates the idea of focusing attention on the students and the implementation of better teaching-learning processes where feedback is a fundamental factor (Nicol & MacFarlane, 2006). Many studies highlight the importance of this type of assessment as it provides suitable and understandable information, makes it possible to solve learning problems, positively affects motivation and maintains the student’s interest in improving his or her performance (Burke, 2009; Carless, 2006; Carless et al., 2011).

Consequently, formative assessment provides meaningful, continuous and well-thought-out information that is authentic, careful and adapted to the students, and it emphasises the students’ participation. A large number of successful experiences have been published on this topic, which have applied assessment models centred on student participation (e.g., Barrientos et al., 2019; Gutiérrez, 2017; Hernando et al., 2017; López Pastor & Pérez Pueyo, 2017). These show that self-assessment and peer assessment, assessment strategies frequently used to facilitate formative assessment (López-Pastor, 2005), have given excellent results.

Among all the educational agents, the aptitude and attitude of the teachers towards assessment play a fundamental role. However, Vázquez Cano (2012) states that the teachers in SE are “reticent to conducting a more systematic assessment, which is rich in comments and applicable to the new assessment philosophy based on competency learning” (p. 30). Without a doubt, the beliefs of the teachers about assessment influence the administration and type of assessment implemented. A teacher often presents different, even contrary, conceptions of assessment that hinder the change to more innovative models (Remesal, 2011). The teacher’s conceptions come from prior experiences and the educational context in which he or she has developed (Hidalgo & Murillo, 2017).

It should therefore be understood that initial teacher training is the ideal context for fomenting formative conceptions of assessment in the students. For this, the experience of good practices is fundamental (Zabalza, 2012) to provoke their transfer to future professional contexts (Hamodi et al., 2017; Lorente & Kirk, 2013). As Gimeno-Sacristán (2012) indicates, teachers tend to reproduce what they experienced in their initial training. Thus, how they experienced assessment as a student, “determines the conceptions of the students themselves and of the teachers” (p.117). Coll & Remesal (2009), after carrying out an in-depth analysis of teachers’ conceptions of assessment, conclude that adequate training of future SE teachers is necessary in which they should discover useful tools for the development of formative assessment. Thus, it is a question of training the teachers so that they come to understand the assessment as a tool which makes it possible to discover what the student has learned and not as an irreversible grading process (Álvarez Méndez, 2001), or an instrument of power to cling to (Vázquez et al., 2016).

In short, it seems evident that the predominance of educational models where the students occupy a secondary position should be reversed. For this, the future teacher should be trained to appreciate and accept the advantages of formative assessment; training that overcomes conceptions and reticence to the change towards innovative models.

The aim of this article was to explore perceptions of the existence of formative assessment in SE starting from two complementary points of view: on the one hand the opinions of the professionals in SE and, on the other, the perceptions of the future professionals who carried out their practicums in schools. The latter are an unusual source of information and we consider that they can contribute an interesting counterpoint to traditional information paths. Moreover, it is fully focused on Initial Teacher Training as the literature seems to recommend.

Methodology

Design and context of the study

A qualitative and quantitative convergent parallel mixed method study was designed to better understand the research topic (Creswell & Plano, 2011) under the prism of the combination of paradigm attributes. Given the complexity of the phenomenon (Bisquerra, 1989; Reichardt & Cook, 1986), the positivist (Bunniss & Kelly, 2010) and interpretative approaches were combined (González Monteagudo, 2001).

The reference context was the Teaching Master’s Degree at the University of Zaragoza. All the school teachers in Aragon are invited to participate in it as tutors and every year the students from the different disciplines carry out their practicums in the schools that want to collaborate in the aforementioned course in this autonomous region.

Participants

The participants in the qualitative study were eight intentionally selected SE teachers (Creswell, 2012). All the teachers were tutors of students of the Teaching Master’s Degree at the University of Zaragoza. There was variability as regards gender (6 women and 2 men) and type of school (4 private and 4 state). For the quantitative study, students from the Teaching Master’s Degree at the University of Zaragoza were contacted by e-mail. Of a total of 499 who were invited to participate, 60 (54% women and 46% men) accepted.

Research instruments

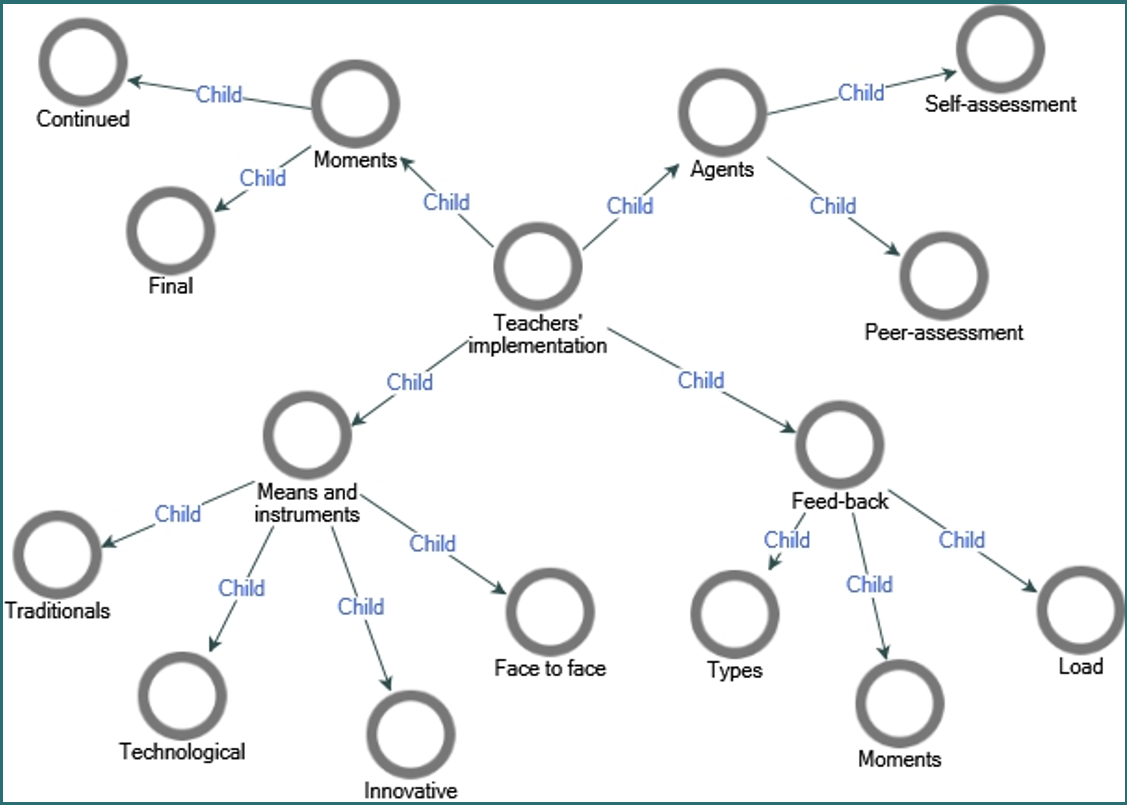

As part of the qualitative strategy, a focus group was formed with the SE teachers. Four areas of observation were determined to explore the perceptions of the formative assessment present in SE which were agreed with the research team: assessment systems, feedback, assessing agents and assessment instruments. A deductive-inductive method was followed and the four categories initially anticipated in the areas of observation were established (agents, feedback, means and moments) as well as a new category which emerged from the data related to the teachers’ beliefs.

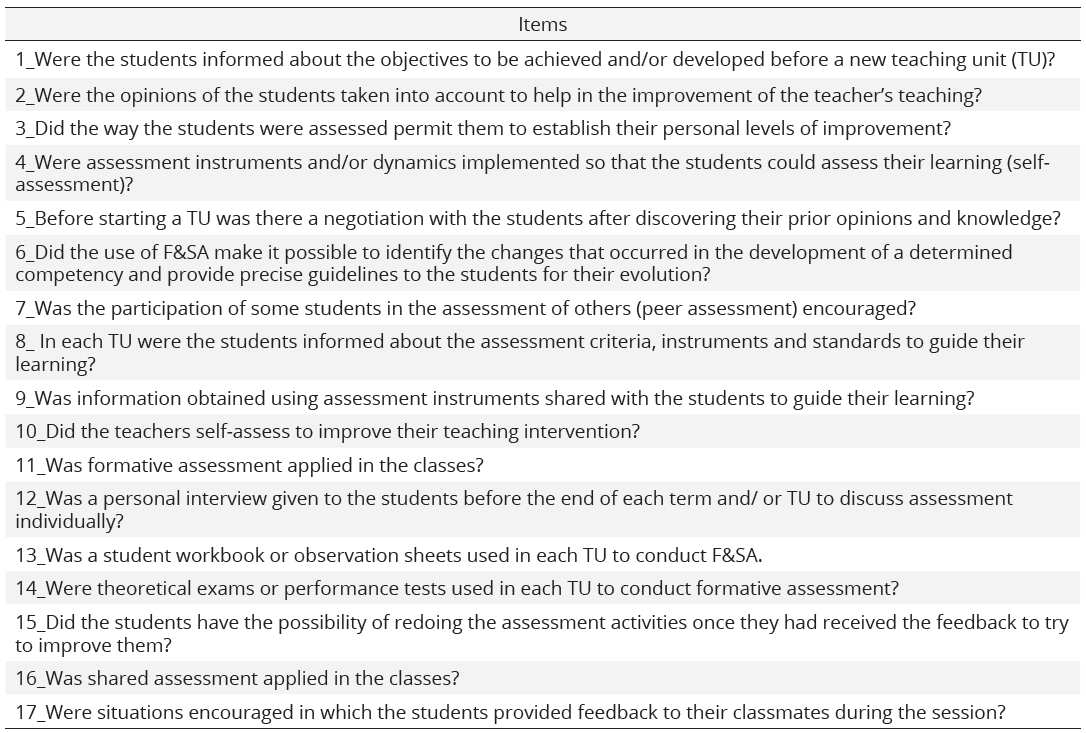

Following the quantitative structure, a questionnaire of perceptions of formative assessment was used adapted from the Perception of the use of Formative and Shared Assessment questionnaire (Espinel, 2017). It was composed of 17 items on the perceptions of the students of the Master’s Degree in their practicums in the schools. The answers were scored on a Likert scale of 5 points: (1) never; (2) almost never; (3) sometimes; (4) often; and (5) always (Table 1).

Items on the questionnaire

Procedure

All the participants agreed to take part having been informed of the characteristics of the study, of the guarantee of the confidentiality of their data and opinions, and of compliance with the ethical standards approved by the corresponding ethics committee. With regard to the focus group, initially the inclusion criteria were defined for the participants and those selected were contacted; a meeting was held in which their consent was requested for the recordings and they were informed that they would receive them to be able to confirm that the data were correct; the contents of the meeting were transcribed, the data were processed and the results analysed. Credibility was strengthened with the report of an external female observer with whom the data were triangulated; teachers who were experts in qualitative research were also consulted. Regarding reliability, the exact words of the focus group were transcribed, a rigorous check was conducted on the process that had been followed, the information was coded using NVivo8 research software and the results were sent to the participants who could make comments and qualify the final interpretation of the results (Lincoln & Guba, 1985).

Regarding the questionnaire, at the beginning recipients were informed of the purpose and the commitment to anonymity and were asked to consent to the use of their replies for academic purposes. Validity and reliability were ensured with the review of the contents of the questionnaire by expert teachers from the Shared and Formative Assessment in Education Network (REFYCE in its Spanish acronym), whose contributions led to reducing the number of items and improving aspects of contents and wording that were included in the final version. Internal consistency of the instrument was estimated with Cronbach’s alpha, giving an overall value of 0.9, considered very high and optimal, and eliminating any single item did not appear to improve the overall value. It was administered as a Google Form during the last two weeks of the course.
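As an illustration of the internal consistency estimate mentioned above, the following is a minimal sketch of how Cronbach’s alpha and the “alpha if item deleted” check can be computed. It is not the authors’ procedure: the function names and the small response matrix are hypothetical, and sample (n - 1) variances are assumed.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])  # number of items
    # Sample variance of each item across respondents
    item_vars = [variance([r[i] for r in responses]) for i in range(k)]
    # Sample variance of the respondents' total scores
    total_var = variance([sum(r) for r in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


def alpha_if_item_deleted(responses):
    """Alpha recomputed after dropping each item in turn (needs at least 3 items)."""
    k = len(responses[0])
    return [
        cronbach_alpha([[s for j, s in enumerate(r) if j != i] for r in responses])
        for i in range(k)
    ]


# Hypothetical 5-point Likert answers (5 respondents x 3 items), for illustration only
toy_responses = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 4, 3],
    [4, 4, 5],
    [5, 5, 5],
]

alpha = cronbach_alpha(toy_responses)
alphas_without_each_item = alpha_if_item_deleted(toy_responses)
```

Comparing each value in `alphas_without_each_item` with `alpha` mirrors the check reported in the text: if no value exceeds the overall alpha, removing an item would not improve consistency.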

Data analysis

The qualitative analysis was performed with the NVivo8 programme. Open, axial and selective coding was conducted (Strauss & Corbin, 2002) using the constant comparative technique (Flick, 2004). The descriptive quantitative analysis was performed with SPSS-v22 to calculate the mean, standard deviation and frequencies for each item on the questionnaire.
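The descriptive statistics named above (mean, standard deviation and frequencies per item) can be sketched as follows. This is an illustrative example rather than the SPSS procedure used in the study: the function name and the scores are hypothetical, and the sample (n - 1) standard deviation is assumed.

```python
from collections import Counter
from statistics import mean, stdev

# Labels of the 5-point Likert scale described for the questionnaire
LABELS = {1: "never", 2: "almost never", 3: "sometimes", 4: "often", 5: "always"}


def describe_item(scores):
    """Return mean, sample SD and percentage frequencies for one questionnaire item."""
    counts = Counter(scores)
    n = len(scores)
    percent = {LABELS[v]: round(100 * counts.get(v, 0) / n, 1) for v in LABELS}
    return {
        "mean": round(mean(scores), 2),
        "sd": round(stdev(scores), 2),
        "percent": percent,
    }


# Hypothetical answers from ten respondents to a single item, for illustration only
hypothetical_item = [1, 2, 2, 3, 3, 3, 4, 4, 5, 5]
summary = describe_item(hypothetical_item)
```

Summing the “never” and “almost never” percentages, or the “often” and “always” percentages, gives the kind of combined frequencies reported in the Results.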

Results

The results are shown under the following headings:

(1) presence of formative and shared assessment; (2) information tasks and attention to the assessment; (3) participation of students and assessment agents; and (4) assessment means and moments.

Quantitative data. Frequencies, means and SD of the items on the questionnaire

On the presence of shared and formative assessment.

The teachers confirmed the diversity and difficulties for applying formative assessment; they stated that the students are not motivated or interested in accepting it, both they and their families give priority to exams; they highlight that even the norm does not facilitate it, as the standards of learning do not include attendance, participation, interest or attitude.

…but we also value participation in class, etc., we have to be very careful and monitor them because some parents do not understand that you have passed one child and failed another when they perhaps have the same mark in the exam, and then they pounce on you… It is very dangerous regarding the marks that are not the final ones. (Section 0, Paragraph 32, 942 characters).

Another difficulty underlined by the teachers is the presence of groups of diverse and excessively numerous students, which they understand can slow progress. Similarly, the extra work load for the teachers in formative assessment is another of the difficulties expressed, as well as the lack of interest of the students when faced with new proposals like self-assessment and reflexion.

I have been asked, why at the end of the unit there is in fact a reflexion on what you have learned, etc. etc., …if they had to do that. And I told them, well, it is very advisable because it means a personal reflexion. (Section 0, Paragraph 25, 308 characters).

From the quantitative data it can be seen that the mean of Item 11, referring to formative assessment, is M = 3.2, although the relatively high deviation (SD = 1.4) and the analysis of the frequencies show a great disparity of opinions: about a quarter think that there is frequent use (26.7%) and slightly fewer that there is scant use (21.7%). Shared assessment (Item 16) is less present: the mean is the second lowest in the study (M = 2.5) and the combined frequency of “never” and “almost never” is over 50% (Table 1).

On Information tasks and attention to the assessment.

The qualitative data show a belief that teachers in general seem to give little importance to the assessment process in comparison with their other teaching tasks, although the load devoted to correcting is very high. The participants think that teachers in general do not submit to a process of assessment by others. They also think that there are great differences between one teacher profile, with few active interventions and a tendency to hinder anything that means a new development in the assessment models, and another profile of teachers focused on improvement and constant innovation. They basically relate these differences to the personal characteristics of the teachers, although also to their profound and entrenched conceptions and beliefs about education.

However, despite the teachers, in some cases, seeming to focus less on the essentials of an adequate assessment, there is evidence that the repercussions of the teachers’ work have very important emotional effects on the students’ learning process, and this idea, which is revealed in isolation in the qualitative data, is a belief of great interest to continue to explore in educational research.

But this I don’t know if it is fear of failing, fear of making a fool of oneself…I don’t know (Section 0, Paragraph 85, 79 characters).

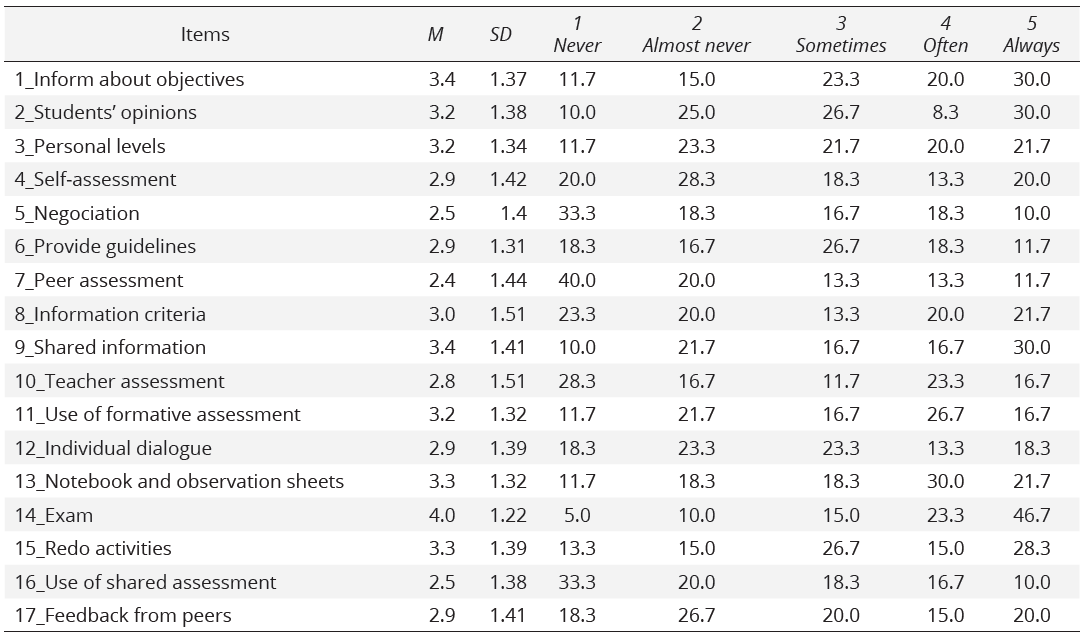

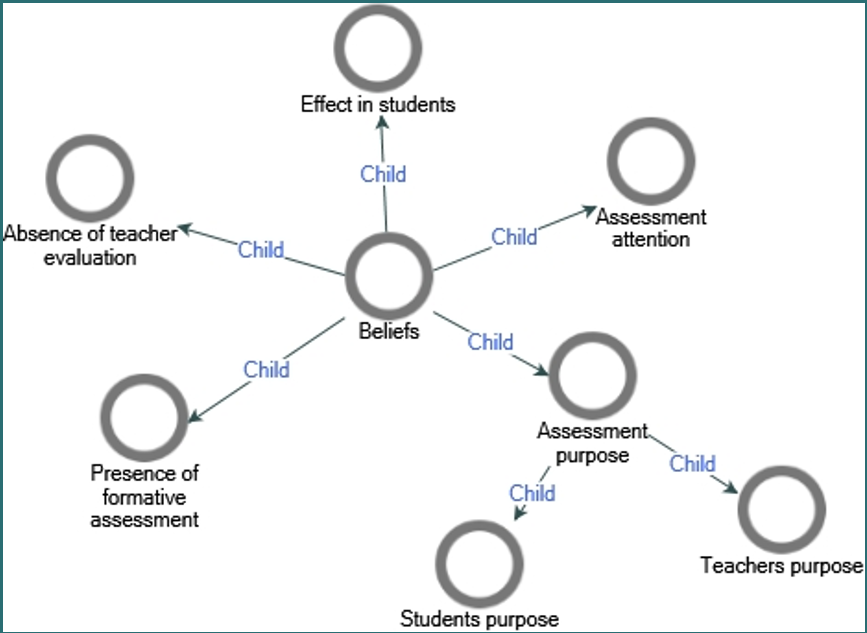

Finally, it is clear that the teachers opt for a model of continuous assessment, not with a formative and shared purpose but rather with a summative, normative and disciplinary aim. The prior experience of the teachers of traditional assessment systems during their university training and SE is responsible for this (Figures 1 and 2).

Figure 1

Applications and use of formative assessment in secondary education teachers

From the quantitative data and related to the management of the information (Items 1 and 8), in the opinion of the students it is clear that the SE teachers mostly inform the students about the educational objectives (only 11.7% thought that they never informed them); while 23.3% thought that they never inform them about the assessment criteria, which reflects a possible lack of attention on the part of the teachers to the assessment task.

Similarly, they perceive variability among the SE teachers in evaluating their own teaching intervention (Item 10): 28.33% report that it never happens and 23.33% that it almost always occurs, with M = 2.8 out of 5 points (SD = 1.51), which gives a clear idea of the dispersion of the replies (Figure 1).

Participation of the students and assessment agents. In this case there is also a great deal of heterogeneity in perceptions, both on whether the students’ opinion is taken into account for the improvement of the teaching (Item 2) and on whether the students are allowed to establish their own levels for improvement (Item 3). There are schools in which the students’ opinion for improving the teaching is always or often taken into account (adding up to 38.3%) and others in which it is never or almost never taken into account (adding up to 35%); equally, there are schools in which they always or almost always establish their own levels for improvement (adding up to 41.7%) and schools in which this never or almost never happens (adding up to 35%).

This heterogeneity also appears in the use of self-assessment (Item 4); half the students indicate that self-assessment is never or almost never used in the schools.

Peer assessment (Item 7) achieved the lowest mean of the study M = 2.4 (SD = 1.44) and also presents dispersion. However, peer assessment as a feedback activity among classmates (Item 17) achieved a higher mean (M = 2.9; SD = 1.41).

The qualitative data obtained also showed heterogeneity, although it is confirmed that regarding the agents involved in the assessment process, the protagonists are the teachers, even though in some cases there are glimpses of negotiation with the students, as in the weighting of the tests, in the shared assessment experiences using peer assessment, and also, in self-assessment, but more oriented towards the traditional objective of reviewing the subject contents to pass the written test and, to a lesser extent, for grading. However, there appear to be isolated cases in which, despite considering the risks, students are allowed to fully participate in part of the grading.

Well, I have used it … it is also dangerous, because obviously, if they give the mark, the prisoner’s dilemma, that you study in economics. If they all agree, in the end they all can pass. But I have realised that in the end, they are almost more demanding than I am. Because in the end the large group dilutes the mark, and the real mark emerges. So, it would be good to establish some limits. I use 40 for them and 60 for me, but there can always be other ways to do it. But I think that it is good that they co-assess because you make them the protagonists. (Section 0, Paragraph 74, 610 characters).

The quantitative data reveal that the teachers quite often use a type of feedback for correcting errors and grading information which varies among teachers, regarding the moment and the way of giving this feedback. Some do it with oral group comments, individual written comments, publicly in front of the whole group, privately, using the platform for parents, new technologies, or with activities and debates carried out with the group of students.

The high work load this feedback implies for the teachers and the stress it causes them is identified.

Let’s see, it means that you spend the whole day correcting (others in unison), it is all day. Because in our case the tests are extensive, let’s say, you give them written comprehension, oral comprehension, grammar, vocabulary, the essay, and then the oral… so you never finish. (Section 0, Paragraph 70, 299 characters).

Assessment means and moments. The students perceive that the exams or performance tests (Item 14) are the means always or almost always used by the teachers (adding up to 75%), with the highest mean in the study (M = 4.0; SD = 1.22) and the least dispersion. Also, half the students observe the use of workbooks and observation sheets (Item 13).

The above-mentioned data are confirmed by the qualitative data which identify that the main assessment means used among teachers is the traditional one, as they prioritise the written test, even though it seems to be common that they complement it with other types of means with less weight, with which they try to attend to the diversity of the students and decrease in some way the pressure of a single test. In certain cases, the traditional written test, similarly, is designed using exercises of different types to attend to the levels and diversity of the students.

…if for example you try to avoid the student’s rejection of this subject, give them a certain percentage for behaviour, work in class, and well certain projects which are voluntary and which they are asked for during the course, and well also the workbook, that you ask for… (Section 0, Paragraph 14, 339 characters).

Figure 2

Applications of formative assessment in secondary education

Furthermore, it seems that the teachers identify the more innovative and technological assessment means as nearer to formative assessment. Among the innovative assessment means there are learning diaries graded using rubrics, PBL (project-based learning) projects with group assessment and oral presentations; and among the technological means, questions using Plickers and Kahoot (IT applications for quick replies and feedback). These latter means present advantages and disadvantages: the advantage is that they are attractive for the students, and the disadvantage is that the students do not perceive that they are learning and may even think that the teachers are not doing their job of giving classes, which is what they should be doing according to the traditional conception.

I believe that you shouldn’t overuse the innovations either, because if not it seems that you don’t give classes, that you are playing all day and obviously the kids need to perceive that they are learning. Because, of course, if you are all day with Kahoots, and Plickers... (Section 0, Paragraph 167, 262 characters).

With regard to the moments when assessment is made, continuous models seem to coexist with final exam models. However, the continuous assessment models that exist in schools are very diverse and there is a certain lack of definition among the teachers about the concept of continuous and formative assessment. Some teachers use written tests for their continuous assessment, others compartmentalise their contents for assessment, others weight the different assessment means or continuous performance tests used and there are some who carry out final written tests, but that in turn they complement with continuous assessment using other methods.

In my case I usually do what is established in the department programme, one test every two teaching units, but what I certainly do, is that during the two months that the period usually lasts up to the exams/ until we examine them, until they do the assessment of the two teaching units, I do tests, I continually do tests, and what I also continually do is to take notes of the classifications, of the student’s participation in class. Yes, I do that. (Section 0, Paragraph 11, 486 characters)

However, it is recognised that this continuity is more summative than formative and, in the cases where there is only a final assessment, it is justified because the prime objective of the assessment is based on the need to grade the student, measuring and weighting their learning results.

…well, we do final tests obviously, we do exams at the end of the assessment, because of course, in the end you need to grade the student. (Section 0, Paragraph 17, 243 characters).

The data from the study show the existence of a great deal of disparity in the use of formative assessment in the schools, in the opinion of the students in their practicums and of the SE teachers. However, it seems clear that summative assessment is predominant in SE, as well as exams and performance tests, methods which are nearer to traditional conceptions of assessment and which limit the action of innovative teachers who desire to implement formative assessment.

Discussion

The study confirms that the use of assessment by SE teachers is diverse. This coincides with the data obtained by Lukas et al. (2006). Similarly, a duality can be observed which is materialised in traditional assessment models compared to alternative ones, as stated by Chaparro & Pérez (2010), López Pastor (2006) or Prieto (2015). It also coincides with the study by Espinel (2017), which confirms that the SE teachers do not generally apply formative and shared assessment, although it is recognised that there is interest among a particular group of teachers in knowing about and innovating in assessment, despite not having clear and precise knowledge of how to progress in its implementation.

Our study highlights that the greatest difficulty for the progress and development of formative assessment is the lack of commitment of the teachers, whether due to their personality or their traditionalist beliefs, and not so much because of their age or experience; this last result coincides with that of the study by Espinel (2017).

In contrast, our findings clearly coincide with other studies in that the teacher is the sole protagonist as the assessing agent, and experiences of peer and self-assessment are very few (Espinel, 2017; Hernández Abenza, 2010; Lukas et al., 2006). However, in our study there is evidence of a resurgence of peer assessment activities among the committed and innovative teachers using new technologies, even though the same is not seen in peer grading.

For Castejón et al. (2011), the instruments are not in essence more or less formative but rather their use is usually associated with a more or less traditional orientation of teaching. In our study the use of traditional assessment instruments like the classic exams is undoubtedly more frequent than the use of those nearer to formative models like learning folders, as determined by other authors (Lukas et al., 2006; Toribio Briñas, 2010; Espinel, 2017) who detect the predominance and the importance that the teachers still give to the exams. On occasions, they do them in a more continuous manner, but with a view to the finals, thus placing themselves mainly on the side of continuous and summative, rather than formative, assessment.

Like Canabal & Margalef (2017), Hattie & Timperley (2007), Stobart (2010), and Nicol & MacFarlane (2006), we understand that feedback is one of the most valuable formative strategies for learning. In this study different forms, types and frequencies of feedback have been detected, as well as an acute perception of work load for this teaching task for the teachers, although it has not been possible to recognise if this feedback is specific or unspecific, if it is directed to the ego or the task and if it presents formative effectiveness or not (Voerman et al., 2012).

This last aspect would be a very interesting future line of research, as would exploring the use of formative assessment in a larger number of SE teachers and schools in other autonomous regions, and supporting formative and shared assessment starting from action research experiences in SE schools and in the degrees and master’s degrees in initial teacher training, as proposed at the time by Coll & Remesal (2009).

Conclusions

Secondary education is strongly standardised in our country, but research has revealed little about the day-to-day reality of teachers in their schools and their everyday assessment tasks.

This study connects the students of the Teaching Master’s Degree, imbued with cutting-edge training and theoretical ideals, with a real context in which secondary education teachers have been adapting their teaching to the complex reality of the educational institution in which they work, to the parents of the students at the corresponding educational level, and to the students themselves, adolescents whose social and affective relations are difficult to manage. The view of the students, future professionals trained in the Teaching Master’s Degree, is combined with that of the expert SE teachers. Comparing both visions helps to advance the understanding of how assessment is developing in SE, which constitutes an important contribution of this study.

From the perspective of both the practicum students and the SE teachers, the great disparity in the use of formative assessment in schools is confirmed. However, it seems clear that summative assessment predominates in SE, as do exams and performance tests.

This shows that the panorama of formative assessment is very heterogeneous: there is incipient interest among teachers with a particular profile, but they lack clear guidance on how to design it correctly for SE. Similarly, there is evidence that implementing formative assessment is hindered by the high number of students per class; by the traditionalist conceptions of teachers, students and families; by norms that translate into exams and performance tests as the main means of assessment; and by the workload involved in giving feedback, all of which puts teachers under stress. The opportunity to implement authentic formative assessment seems to lie in encouraging the involved, enthusiastic and innovative teachers so that they feel supported by their schools and break with the traditionalist conception of assessment.

Equally, the study brings a complex reality into focus without intending to generalise the results. One possible limitation is that the same instruments were not applied to the two groups of respondents.

Nevertheless, the important questions raised in this study point to the need for further research centred on SE to address them in greater depth.

Funding

Grant RTI2018-093292-B-I00 funded by MCIN/AEI/10.13039/501100011033 and by “ERDF A way of making Europe”.

References

Álvarez Méndez, J. M. (2001). Evaluar para conocer, examinar para excluir. Morata.

Barrientos, E., López Pastor, V. M., & Pérez Brunicardi, D. (2019). ¿Por qué hago evaluación formativa y compartida y/o evaluación para el aprendizaje en EF? La influencia de la formación inicial y permanente del profesorado. Retos: nuevas tendencias en educación física, deporte y recreación, 36, 37-43. https://recyt.fecyt.es/index.php/retos/article/view/66478

Biggs, J. (2005). Calidad del aprendizaje universitario. Narcea.

Bisquerra, R. (1989). Métodos de investigación educativa: guía práctica. EAC.

Bunniss, S., & Kelly, D. R. (2010). Research paradigms in medical education research. Medical Education, 44, 358-366. https://doi.org/10.1111/j.1365-2923.2009.03611.x

Burke, D. (2009). Strategies for using feedback students bring to higher education. Assessment & Evaluation in Higher Education, 34(1), 41-50. https://doi.org/10.1080/02602930801895711

Canabal, C., & Margalef, L. (2017). La retroalimentación: la clave para una evaluación orientada al aprendizaje. Profesorado. Revista de Currículum y Formación de Profesorado, 21(2), 149-170. http://www.redalyc.org/pdf/567/56752038009.pdf

Carless, D. (2006). Differing perceptions in the feedback process. Studies in Higher Education, 31(2), 219-233. https://doi.org/10.1080/03075070600572132

Carless, D., Salter, D., Yang, M., & Lam, J. (2011). Developing sustainable feedback practices. Studies in Higher Education, 36(4), 395-407. https://doi.org/10.1080/03075071003642449

Castejón, J., Capllonch, M., González, N., & López Pastor, V. M. (2011). Técnicas e instrumentos de evaluación. En V. M. López Pastor (Coord.), Evaluación formativa y Compartida en Educación Superior (pp. 45-64). Narcea.

Chaparro, F., & Pérez, A. (2010). La evaluación en Educación Física: enfoques tradicionales versus enfoques alternativos. Efdeportes, 140. https://goo.gl/3GoiZh

Coll, C., & Remesal, A. (2009). Concepciones del profesorado de matemáticas acerca de las funciones de la evaluación del aprendizaje en la educación obligatoria. Infancia y Aprendizaje, 32(3), 391-404. https://doi.org/10.1174/021037009788964187

Creswell, J. W. (2012). Educational Research: planning, conducting and evaluating quantitative and qualitative research. Pearson Education.

Creswell, J. W., & Plano Clark, V. L. (2011). Designing and Conducting Mixed Methods Research. Sage Publications.

Espinel, P. A. (2017). Evaluación formativa y compartida y modelo competencial en Secundaria: estudios de caso en la materia de Educación Física. Tesis doctoral. Universidad Católica de Murcia. http://repositorio.ucam.edu/handle/10952/2564

Flick, U. (2004). Introducción a la investigación cualitativa. Morata.

Gimeno-Sacristán, J. (2012). ¿Por qué habría de renovarse la enseñanza en la universidad? En J. B. Martínez (Coord.), Innovación en la universidad. Prácticas, políticas y retóricas (pp. 27-51). Graó.

Glasser, A., & Strauss, C. (2002). Bases de la investigación cualitativa. Técnicas y procedimientos para desarrollar la teoría fundamentada. Universidad de Antioquía.

González Monteagudo, J. (2001). El paradigma interpretativo en la investigación social y educativa: nuevas respuestas para viejos interrogantes. Cuestiones pedagógicas, 15, 227-246. https://goo.gl/s3ohNP

Gutiérrez, C. (2017). Una experiencia de evaluación formativa en la asignatura educación física en la enseñanza secundaria. En V. M. López Pastor y Á. Pérez Pueyo (Coords), Evaluación formativa y compartida en educación: experiencias de éxito en todas las etapas educativas (pp. 414-421). Universidad de León, Secretariado de Publicaciones. https://buleria.unileon.es/

Hamodi, C., López, V. M., & López, A. T. (2017). If I experience formative assessment whilst studying at university, will I put it into practice later as a teacher? Formative and shared assessment in Initial Teacher Education (ITE). European Journal of Teacher Education, 40(2), 171-190. https://doi.org/10.1080/02619768.2017.1281909

Hattie, J., & Timperley, H. (2007). The power of feedback. Review of Educational Research, 77(1), 81-112. https://doi.org/10.3102/003465430298487

Hernández Abenza, L. (2010). Evaluar para aprender: hacia una dimensión comunicativa, formativa y motivadora de la evaluación. Enseñanza de las Ciencias, 28(2), 285-293. https://doi.org/10.5565/rev/ec/v28n2.54

Hernando, A., Hortigüela, D., & Pérez, A. (2017). El proceso de evaluación formativa en la realización de un “video tutorial” de estiramientos en inglés en un centro bilingüe. En V. M. López Pastor y Á. Pérez Pueyo (Coords), Evaluación formativa y compartida en educación: experiencias de éxito en todas las etapas educativas (pp. 260-268). Universidad de León, Secretariado de Publicaciones. https://buleria.unileon.es/

Hidalgo, N., & Murillo, F. J. (2017). Las concepciones sobre el proceso de evaluación del aprendizaje de los estudiantes. REICE: Revista Iberoamericana sobre Calidad, Eficacia y Cambio en Educación, 15(1), 107-128.

Lincoln, Y. S., & Guba, E. G. (1985). Naturalistic Inquiry. Sage Publications.

Ley Orgánica 2/2006, de 3 de mayo, de Educación (LOE). BOE núm. 106, de 4 de mayo de 2006.

Ley Orgánica 8/2013, de 9 de diciembre, para la Mejora de la Calidad Educativa (LOMCE). BOE núm. 295, de 10 de diciembre de 2013.

Ley Orgánica 3/2020, de 29 de diciembre, por la que se modifica la Ley Orgánica 2/2006, de 3 de mayo, de Educación (LOMLOE). BOE núm. 340, de 30 de diciembre de 2020.

López Pastor, V. M. (2005). La participación del alumnado en la evaluación: la autoevaluación, la coevaluación y la evaluación compartida. Tándem, 17, 1-8.

López Pastor, V. M. (2006). La evaluación en Educación Física. Revisión de los modelos tradicionales y planteamiento de una alternativa: la evaluación formativa y compartida. Miño y Dávila.

López Pastor, V. M. (Coord.). (2011). Evaluación formativa y compartida en Educación Superior: Propuestas, técnicas, instrumentos y experiencias. Narcea.

López Pastor, V. M., & Pérez Pueyo, A. (Coords.). (2017). Evaluación formativa y compartida en Educación: experiencias de éxito en todas las etapas educativas. Universidad de León. http://buleria.unileon.es/handle/10612/5999

Lorente, E., & Kirk, D. (2013). Alternative democratic assessment in PETE: an action-research study exploring risks, challenges and solutions. Sport, Education and Society, 18, 77-96. https://doi.org/10.1080/13573322.2012.713859

Lukas, J. F., Santiago, K., Joaristi, L., & Lizasoain, L. (2006). Usos y formas de la evaluación por parte del profesorado de la ESO. Un modelo multinivel. Revista de Educación, 340, 667-693. https://goo.gl/Pmpdiv

Nicol, D. J., & MacFarlane, D. (2006). Formative assessment and self-regulated learning: a model and seven principles of good feedback practice. Studies in Higher Education, 31(2), 199-218. https://doi.org/10.1080/03075070600572090

OCDE (1999). La definición y selección de competencias clave (DeSeCo). Resumen ejecutivo. https://goo.gl/p554ck

Parlamento Europeo (2006). Recomendación del Parlamento Europeo y del Consejo, de 18 de diciembre de 2006, sobre las competencias clave para el aprendizaje permanente (2006/962/CE). https://eur-lex.europa.eu/LexUriServ/LexUriServ.do?uri=OJ:L:2006:394:0010:0018:ES:PDF

Prieto, A. (2015). Los paradigmas de la evaluación en Educación Física. Multitarea. Revista de Didáctica, 7, 110-130. https://goo.gl/6728W7

Red de Evaluación Formativa y Compartida en Educación (REFYCE). (2020). https://redevaluacionformativa.wordpress.com/

Reichardt, C. S., & Cook, T. D. (1986). Hacia una superación del enfrentamiento entre los métodos cuantitativos y los cualitativos. En T. D Cook y C. S. Reichardt (eds.), Métodos cualitativos y cuantitativos en investigación evaluativa (pp. 25-58). Morata.

Remesal, A. (2011). Primary and secondary teachers’ conceptions of assessment: A qualitative study. Teaching and Teacher Education, 27(2), 472-482. https://doi.org/10.1016/j.tate.2010.09.017

Stobart, G. (2010). Tiempos de pruebas. Los usos y abusos de la evaluación. Morata.

Strauss, A., & Corbin, J. (2002). Bases de la investigación cualitativa: técnicas y procedimientos para desarrollar la teoría fundamentada. Universidad de Antioquía.

Toribio Briñas, L. (2010). Las competencias básicas: el nuevo paradigma curricular en Europa. Foro de Educación, 12, 25-44. https://goo.gl/Dgyimh

Vázquez, B., Jiménez, R., & Mellado, V. (2016). ¿El tiempo garantiza el cambio en el profesorado? Estudio de un caso centrado en la evaluación de aprendizajes. Revista Electrónica Interuniversitaria de Formación del Profesorado, 19(2), 139-154. https://goo.gl/sjfTRJ

Vázquez Cano, E. (2012). La evaluación del aprendizaje en primaria y secundaria: los indicadores de evaluación. Espiral. Cuadernos del Profesorado, 5(10), 30-41. http://www.cepcuevasolula.es/espiral.

Voerman, L., Meijer, P. C., Korthagen, F. A. J., & Simons, P. R. J. (2012). Types and frequencies of feedback interventions in classroom interaction in secondary education. Teaching and Teacher Education, 28, 1107-1115. https://doi.org/10.1016/j.tate.2012.06.006

Zabalza, M. A. (2012). El estudio de las «buenas prácticas» docentes en la enseñanza universitaria. Revista de Docencia Universitaria, 19(1), 17-42. https://doi.org/10.4995/redu.2012.6120

Author notes

* Correspondence: Mª Rosario Romero-Martín, rromero@unizar.es

Additional information

How to cite this article: Asún Dieste, S., Romero-Martín, M. R., Cascarosa Salillas, E., & Iranzo Navarro, I. (2023). Assessment in Secondary Education, is it formative and shared? Exploring perceptions of professionals and future professionals in Education. Cultura, Ciencia y Deporte, 18(55), 191-213. https://doi.org/10.12800/ccd.v18i55.1956

ISSN: 1696-5043

Sonia Asún Dieste, Mª Rosario Romero-Martín, Esther Cascarosa Salillas, Isabel Iranzo Navarro

University of Zaragoza, Spain